VAST Open Source Month | TripoSG & TripoSF, Setting a New SOTA in 3D Generation

In March 2024, VAST and Stability AI jointly open-sourced TripoSR, a large-scale 3D model. With its revolutionary ability to generate a 3D model from a single image in just 0.5 seconds, it quickly became a go-to tool for 3D creators worldwide.

That same year, open-source projects kept pushing the boundaries of the AI industry, fueling rapid growth in both academic research and commercial applications.

VAST further advanced its Tripo series, launching Tripo 2.0 in September 2024 and Tripo 2.5 in January 2025. Trained on tens of millions of high-quality native 3D assets, each release set new marks in generation speed, model accuracy, and overall success rate, with geometric precision that pushed the frontier of 3D model creation.

As a foundation-model team competing globally, we know that disruptive innovation in model architecture and breakthroughs in model capability are essential. While we continue to refine Tripo into an ever more "perfect solution" in a closed environment, we believe it is even more important to become a "fundamental building block" of the open-source ecosystem, because an open technical ecosystem holds far greater long-term value than a closed one.

With that in mind, in March 2025, we launched our "Technology Open-Source Month" initiative.

We plan to open-source eight major projects in sequence, spanning the entire technical chain: from foundational generation models and core functional components to explorations of innovative ideas. Our ambition is to build the world's first end-to-end open-source 3D generation system, and we sincerely hope that researchers and developers in the 3D generation community will find our work both inspiring and valuable.

Now, VAST is releasing two foundational 3D generation models:

TripoSG and TripoSF.

TripoSG's Major Upgrade: The First MoE Transformer Architecture in 3D Generation

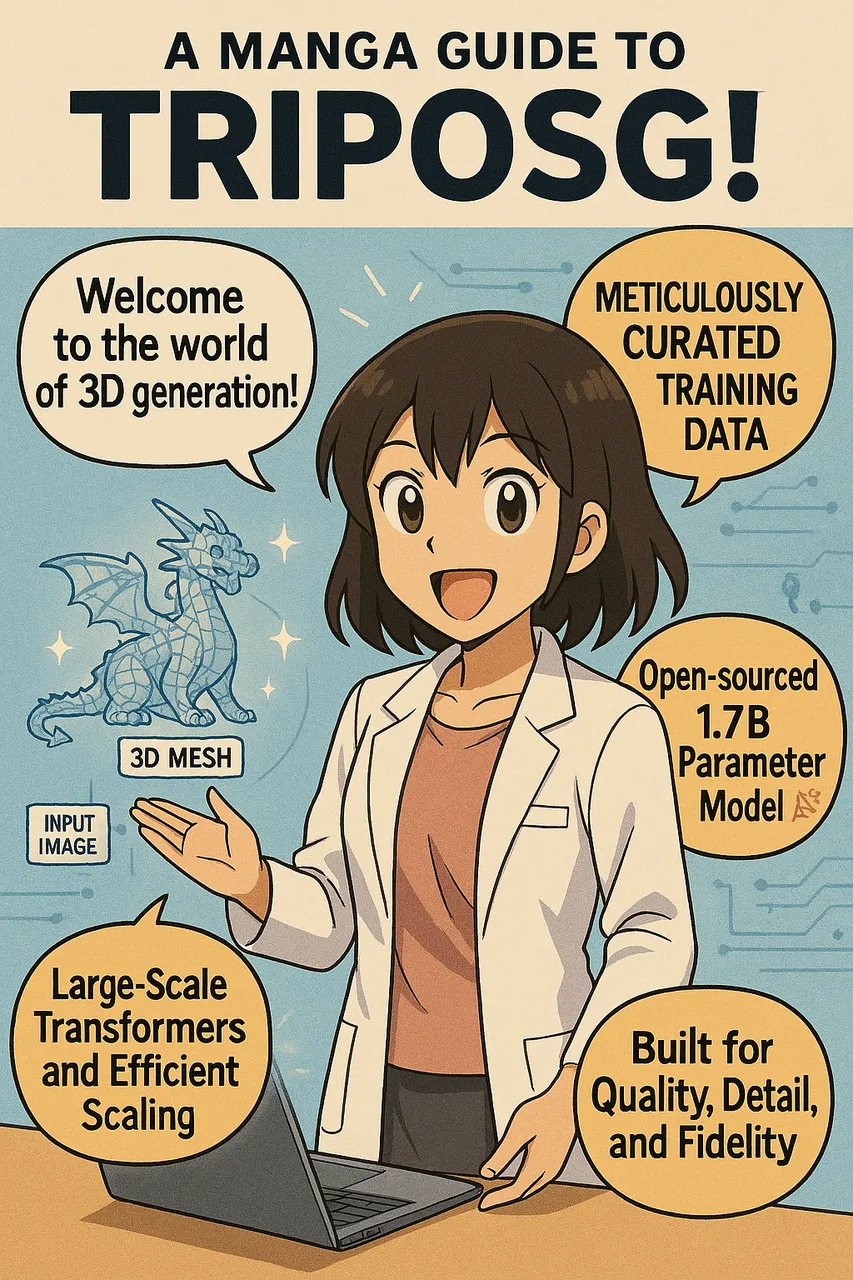

TripoSG is a foundational 3D generation model built on a Rectified Flow (RF)-based MoE Transformer architecture. In this release, we open-source the weights and inference code for the 1.5B-parameter TripoSG model, and you can try it out through an interactive demo on HuggingFace.

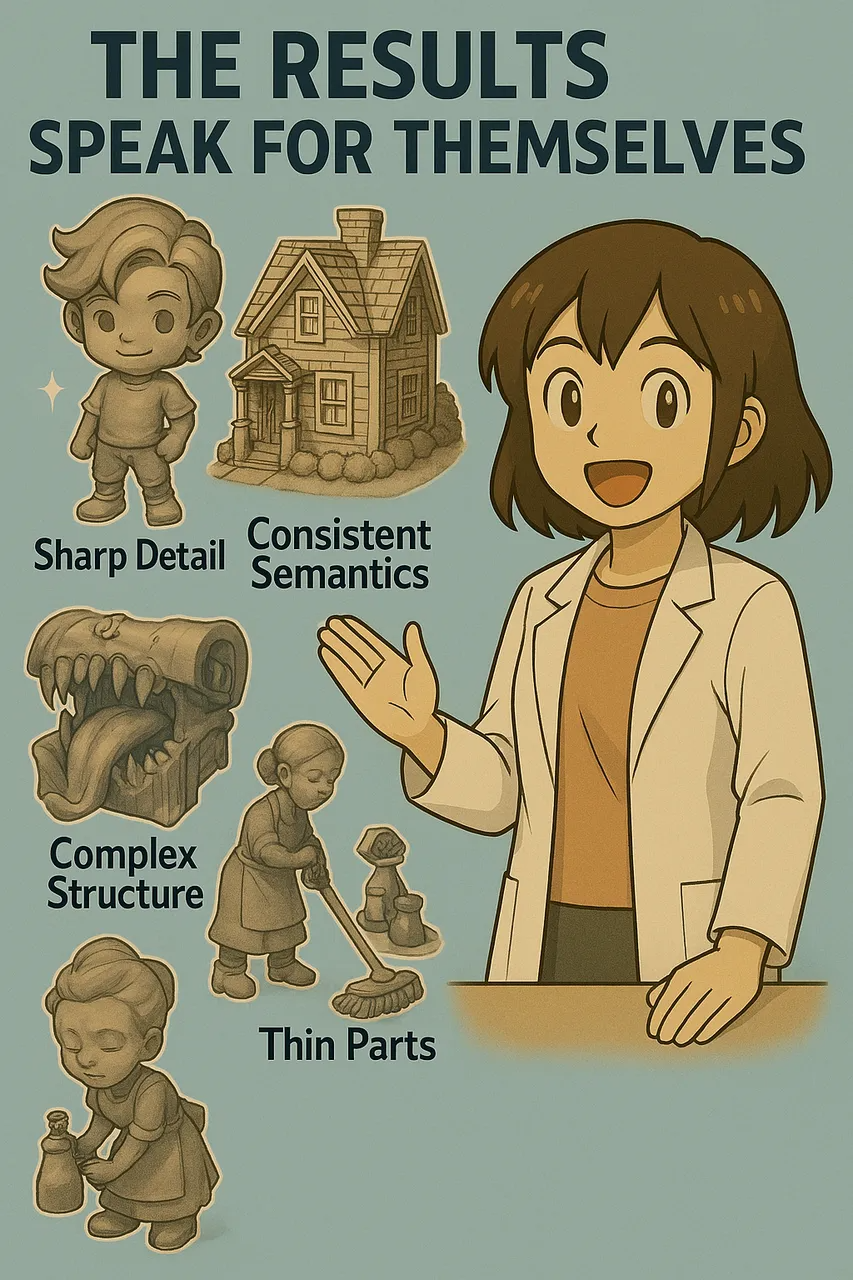

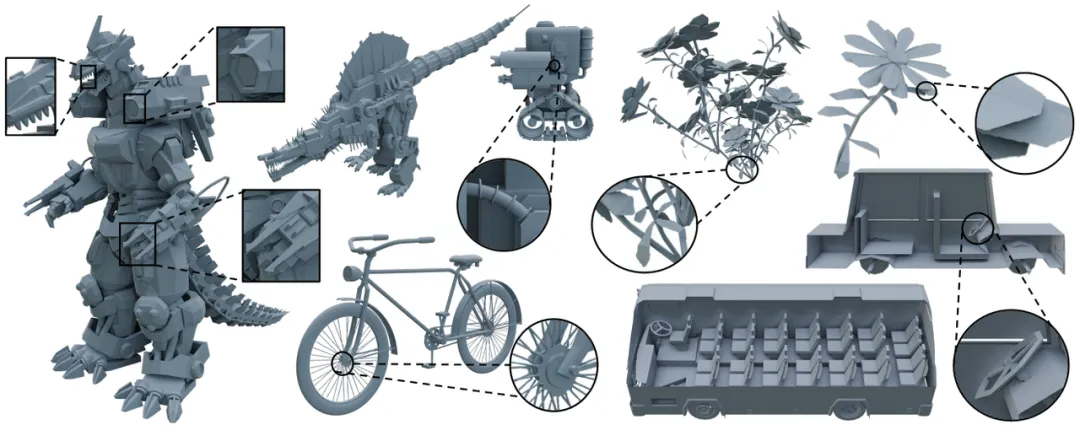

Tests have shown that TripoSG's output quality is on par with Tripo 2.0—surpassing all existing open-source 3D generation projects. Its standout advantages include excellent generalization and high stability when generating complex composite objects.

Following the Scaling Law with higher-quality data and larger models remains the key to TripoSG's success. Below are four key innovations spanning efficient training, architecture design, and data governance:

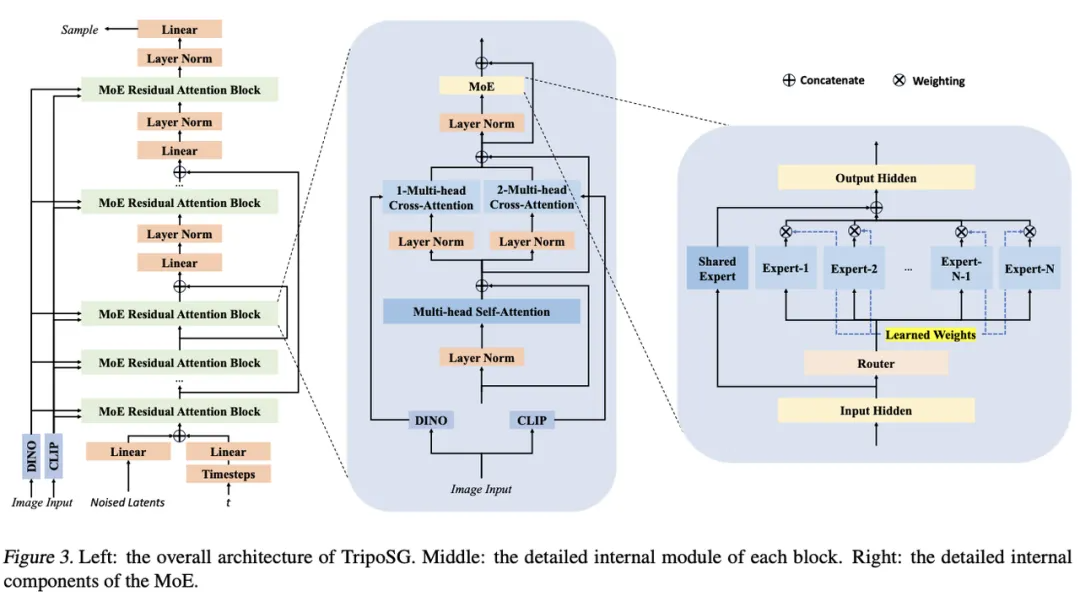

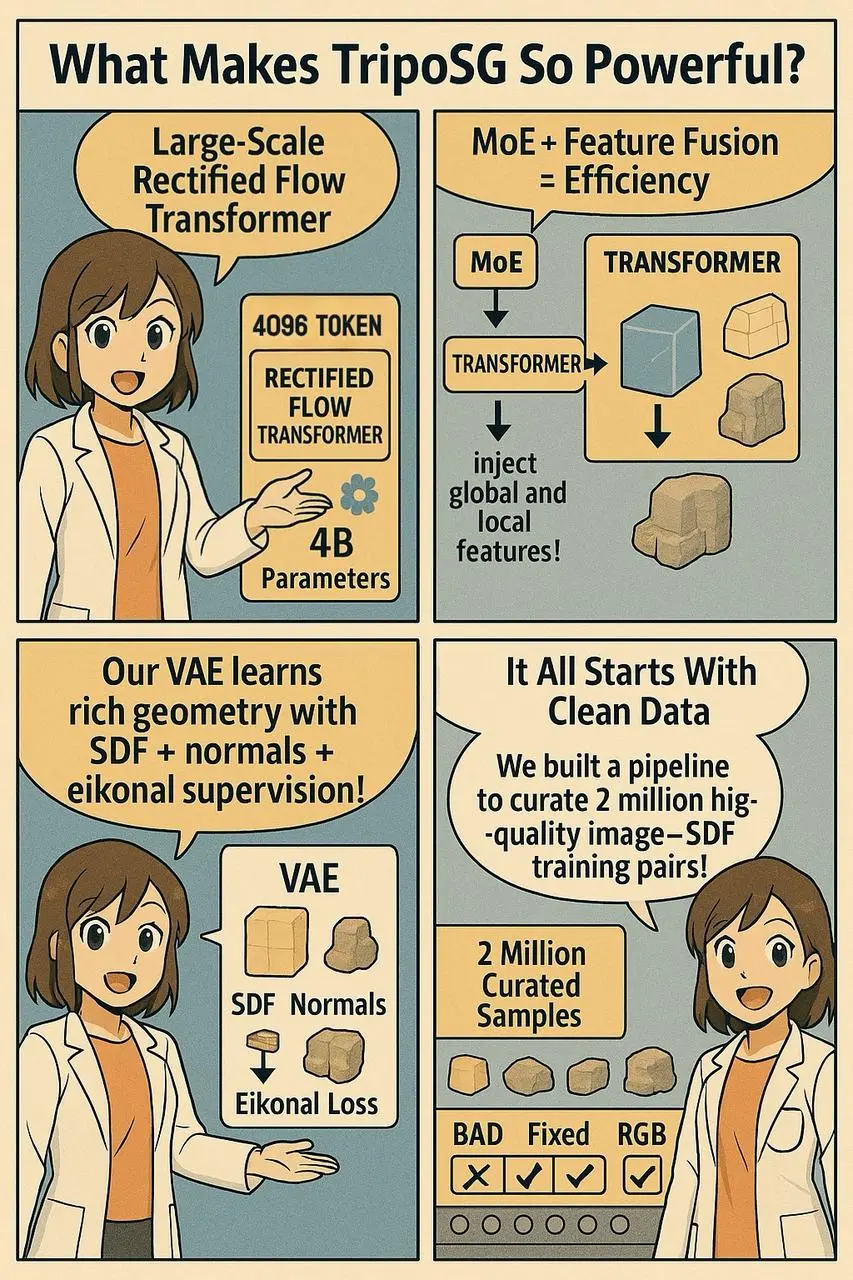

1. Pioneering the Use of an RF-Based Transformer for 3D Shape Generation

Early in Tripo 2.0's development, we found that, compared with traditional diffusion models, Rectified Flow provides a simpler, linear path between noise and data. This makes training more stable and efficient, and pairing it with a DiT (Diffusion Transformer) backbone improves model stability further.
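
To make the "straight path" concrete, here is a minimal NumPy sketch of a Rectified Flow training objective and Euler sampler. It assumes the common convention that t = 0 is data and t = 1 is Gaussian noise; the function names are illustrative and not taken from the TripoSG code, and in the real model the velocity would be predicted by a DiT rather than supplied by an oracle.

```python
import numpy as np

def rf_interpolate(x0, noise, t):
    """Point at time t on the straight line from data x0 (t=0) to noise (t=1)."""
    return (1.0 - t) * x0 + t * noise

def rf_velocity_target(x0, noise):
    """Regression target dx_t/dt; it is the same at every t along the path."""
    return noise - x0

def rf_training_loss(velocity_fn, x0, noise, t):
    """Mean squared error between predicted and target velocity at x_t."""
    x_t = rf_interpolate(x0, noise, t)
    return np.mean((velocity_fn(x_t, t) - rf_velocity_target(x0, noise)) ** 2)

def rf_sample(velocity_fn, noise, num_steps=10):
    """Euler integration of dx/dt = v(x, t) from t=1 (noise) back to t=0 (data)."""
    x = noise.copy()
    ts = np.linspace(1.0, 0.0, num_steps + 1)
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        x = x + (t_next - t_cur) * velocity_fn(x, t_cur)  # dt is negative here
    return x

rng = np.random.default_rng(0)
x0 = rng.normal(size=(4, 3))      # stand-in for a batch of shape latents
noise = rng.normal(size=(4, 3))

def oracle(x, t):
    """A 'perfect' velocity model for this single (x0, noise) pair."""
    return noise - x0

print(rf_training_loss(oracle, x0, noise, t=0.3))
print(np.abs(rf_sample(oracle, noise, num_steps=1) - x0).max())
```

Because the target velocity is constant along each path, a perfect predictor recovers the data in a single Euler step; a learned network is only approximately straight, so several steps are used in practice.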

2. Introducing the First MoE Transformer in 3D for Better Scaling

Although MoE Transformers have been used in language, image, and video models, TripoSG is the first to apply them efficiently in the 3D domain. Because each token is routed to only a few experts, this approach dramatically increases the model's parameter capacity, especially in the deeper, more critical layers, without adding substantial inference cost.
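
A minimal sketch of top-k expert routing, the general mechanism that lets MoE layers add parameters without a proportional increase in per-token compute. The gating scheme (softmax over the k selected logits), the default k=2, and the toy linear experts are assumptions for illustration; this is not TripoSG's actual layer.

```python
import numpy as np

def softmax(z):
    z = z - z.max()                      # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def moe_layer(x, w_gate, experts, k=2):
    """Top-k mixture of experts (illustrative).
    x: (tokens, d); w_gate: (d, n_experts); experts: callables mapping (d,) -> (d,).
    """
    logits = x @ w_gate                              # router scores, (tokens, n_experts)
    chosen = np.argsort(-logits, axis=-1)[:, :k]     # k highest-scoring experts per token
    out = np.zeros_like(x)
    for i, token in enumerate(x):
        weights = softmax(logits[i, chosen[i]])      # renormalise over chosen experts only
        for w, e in zip(weights, chosen[i]):
            out[i] += w * experts[e](token)          # unchosen experts are never evaluated
    return out, chosen

rng = np.random.default_rng(0)
d, n_experts = 8, 4
x = rng.normal(size=(5, d))                          # 5 tokens
w_gate = rng.normal(size=(d, n_experts))
mats = [rng.normal(size=(d, d)) for _ in range(n_experts)]
experts = [lambda v, m=m: v @ m for m in mats]       # toy linear experts
y, chosen = moe_layer(x, w_gate, experts, k=2)
print(chosen)                                        # which 2 experts each token used
```

Each token evaluates only k of the n experts, so total parameters grow with n while per-token expert compute grows with k.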

Additionally, built on the Transformer framework, TripoSG incorporates key enhancements such as skip-connections to improve cross-layer feature fusion. An independent cross-attention mechanism also efficiently injects global (CLIP) and local (DINOv2) image features, ensuring precise alignment between input 2D images and the generated 3D shapes.
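
The sketch below illustrates one plausible reading of "independent cross-attention": separate attention branches, each with its own projections, for global (CLIP) and local (DINOv2) image tokens, plus a U-ViT-style long skip that concatenates a shallow and a deep hidden state and projects back to model width. Everything here (single head, parallel branches added to the residual, the hypothetical `conditioned_update` and `skip_fuse` helpers, the parameter layout) is a simplification for illustration, not TripoSG's published design.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(h, ctx, wq, wk, wv):
    """Single-head cross-attention: queries from latent tokens h, keys/values from image tokens ctx."""
    q, k, v = h @ wq, ctx @ wk, ctx @ wv
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores, axis=-1) @ v

def conditioned_update(h, clip_tokens, dino_tokens, params):
    """Hypothetical: two independent branches with their own weights, both added to the residual."""
    global_term = cross_attention(h, clip_tokens, *params["clip"])
    local_term = cross_attention(h, dino_tokens, *params["dino"])
    return h + global_term + local_term

def skip_fuse(shallow, deep, w_skip):
    """Hypothetical U-ViT-style long skip: concatenate features, project back to model width."""
    return np.concatenate([shallow, deep], axis=-1) @ w_skip

rng = np.random.default_rng(0)
d, d_attn, d_clip, d_dino = 16, 8, 12, 20
h = rng.normal(size=(32, d))                 # latent shape tokens
clip = rng.normal(size=(1, d_clip))          # one global image token
dino = rng.normal(size=(64, d_dino))         # many local patch tokens

def proj(d_ctx):
    return (rng.normal(size=(d, d_attn)), rng.normal(size=(d_ctx, d_attn)), rng.normal(size=(d_ctx, d)))

params = {"clip": proj(d_clip), "dino": proj(d_dino)}
out = conditioned_update(h, clip, dino, params)
fused = skip_fuse(h, out, rng.normal(size=(2 * d, d)))
print(out.shape, fused.shape)
```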

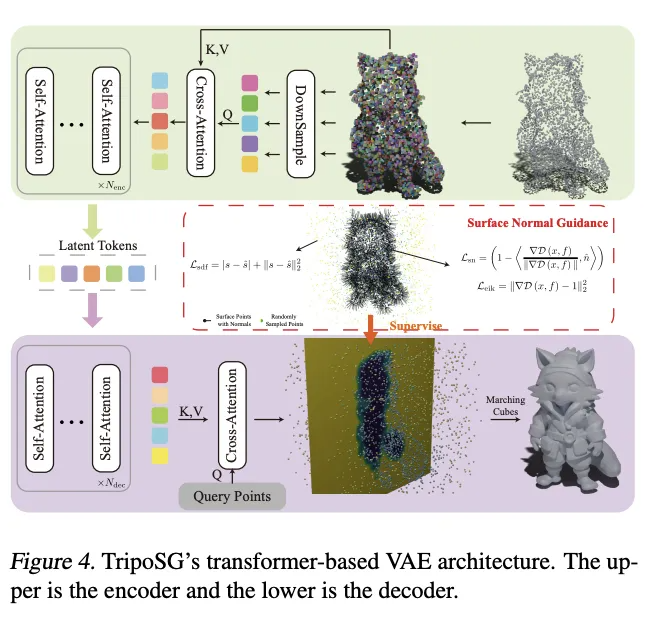

3. Enhancing Geometric Representation with a High-Quality VAE and Innovative Geometric Supervision

We have continuously pursued better geometric representations. In TripoSG, we adopted a VAE that uses Signed Distance Functions (SDFs) for geometric encoding, which offers higher precision than the previously popular occupancy grids. Moreover, the Transformer-based VAE architecture generalizes exceptionally well across resolutions, handling high-resolution inputs without the need for retraining.
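
The precision gap between SDFs and occupancy shows up even in one dimension. This sketch samples a sphere along a coarse ray: occupancy only says which side of the surface each sample lies on, so the surface can be located to within half a cell at best, whereas the SDF's signed values let linear interpolation recover the zero crossing. The sphere, grid spacing, and helper names are illustrative assumptions.

```python
import numpy as np

def sphere_sdf(p, radius):
    """Signed distance to a sphere centred at the origin (negative inside)."""
    return np.linalg.norm(p, axis=-1) - radius

def surface_from_occupancy(xs, occ):
    """Best guess from inside/outside labels: midpoint of the crossing cell.
    Assumes xs[0] is inside."""
    i = np.argmax(~occ)                       # first outside sample
    return 0.5 * (xs[i - 1] + xs[i])

def surface_from_sdf(xs, sdf):
    """Linear interpolation of the SDF's zero crossing. Assumes xs[0] is inside."""
    i = np.argmax(sdf > 0)                    # first sample with positive distance
    a, b = sdf[i - 1], sdf[i]
    return xs[i - 1] + (xs[i] - xs[i - 1]) * (-a) / (b - a)

radius = 0.3137
xs = np.linspace(0.0, 1.0, 17)                # coarse 1D grid along +x, spacing 1/16
pts = np.stack([xs, np.zeros_like(xs), np.zeros_like(xs)], axis=-1)
sdf = sphere_sdf(pts, radius)
occ = sdf < 0

occ_err = abs(surface_from_occupancy(xs, occ) - radius)
sdf_err = abs(surface_from_sdf(xs, sdf) - radius)
print(f"occupancy error: {occ_err:.5f}, SDF error: {sdf_err:.2e}")
```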

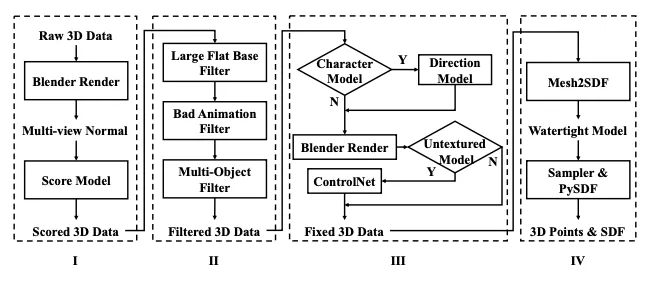

4. Emphasizing Data Governance with a Comprehensive Data Construction Pipeline

Both data quality and quantity are crucial. VAST possesses the largest collection of high-quality native 3D data globally and has developed an end-to-end data governance pipeline for the open-source community.

The process includes: Quality Scoring → Data Filtering → Fixing & Augmentation → SDF Production

Using this pipeline, we built a dataset of 2 million high-quality "image-SDF" training pairs. Ablation studies clearly demonstrate that models trained on this refined dataset significantly outperform those trained on larger, unfiltered raw datasets.
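
The four stages above can be sketched as a simple staged pipeline. The scorer, threshold, fixers, renderer, and SDF converter are all hypothetical placeholders passed in as callables, and augmentation that creates extra samples is left out for brevity; VAST has not described its pipeline at this level of detail, so treat this only as an illustration of the ordering: score, filter, then spend fixing and SDF-conversion effort only on the assets that survive.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

@dataclass
class Asset:
    name: str
    quality: float = 0.0
    log: List[str] = field(default_factory=list)

def build_training_pairs(
    assets: List[Asset],
    scorer: Callable[[Asset], float],
    threshold: float,
    fixers: List[Callable[[Asset], None]],
    render: Callable[[Asset], object],
    to_sdf: Callable[[Asset], object],
) -> List[Tuple[object, object]]:
    # 1. Quality scoring (hypothetical scorer)
    for a in assets:
        a.quality = scorer(a)
    # 2. Data filtering: drop everything below the quality bar
    kept = [a for a in assets if a.quality >= threshold]
    # 3. Fixing (in place), applied only to the survivors
    for a in kept:
        for fix in fixers:
            fix(a)
    # 4. SDF production: pair a condition image with the target SDF
    return [(render(a), to_sdf(a)) for a in kept]

assets = [Asset("chair"), Asset("broken_scan"), Asset("lamp")]
scores = {"chair": 0.9, "broken_scan": 0.2, "lamp": 0.7}   # made-up scores
pairs = build_training_pairs(
    assets,
    scorer=lambda a: scores[a.name],
    threshold=0.5,
    fixers=[lambda a: a.log.append("fixed")],
    render=lambda a: f"img_{a.name}",
    to_sdf=lambda a: f"sdf_{a.name}",
)
print(pairs)
```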

TripoSF Unlocks Internal 3D Structure Generation: A Breakthrough Tokenizer Achieves New SOTA in 3D Generation

TripoSF is a foundational 3D model developed by VAST based on a novel 3D representation called SparseFlex.

Testing shows that its results surpass all existing open-source and closed-source work. We are open-sourcing the pre-trained VAE model and related inference code for TripoSF, with the full version to be unveiled in Tripo 3.0.

TripoSF redefines the "upper bound of model quality." For the first time, the model can generate not only the "back" of an object but also its "interior structure" (as seen in the bus seat and driver cabin examples).

Furthermore, while previous works tended to generate clothing or petals with overly thick geometries, TripoSF handles open-surface assets with exceptional finesse.

It also delivers unprecedented detail in other model categories.

The primary objective in developing TripoSF was to break through the traditional bottlenecks in 3D modeling related to detail, complex structures, and scalability. Past methods often suffered from detail loss during preprocessing, inadequate expression of complex geometries, or exorbitant memory and computation costs at high resolutions. Our search for a tokenizer that can push the limits of 3D generation led to the development of SparseFlex—a significant step forward.

SparseFlex builds on the strengths of FlexiCubes, which can differentiably extract meshes with sharp features, and introduces a sparse voxel structure that stores and computes voxel information only near object surfaces. The benefits are significant:

- Significantly Reduced Memory Usage: Enables TripoSF to train and infer at a high resolution of 1024³.

- Native Support for Arbitrary Topologies: By omitting voxels in empty regions, it naturally represents open surfaces (such as fabrics and leaves) while effectively capturing internal structures.

- Direct Optimization via Rendering Loss: SparseFlex is differentiable, allowing TripoSF to use rendering loss for end-to-end training and avoiding detail degradation caused by data conversion (e.g., watertightness adjustments).
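
A rough way to see why storing voxels only near the surface matters: count the voxels of a dense grid whose centres lie within one voxel width of a sphere's surface. This sketch illustrates surface sparsity in general under arbitrary assumptions (sphere radius, band width); it is not SparseFlex's data structure, which also stores FlexiCubes parameters per active voxel.

```python
import numpy as np

def count_near_surface(res, radius=0.35, band_voxels=1.0):
    """Count voxels of a res^3 grid on [-0.5, 0.5]^3 whose centres are within
    band_voxels voxel widths of a sphere's surface (|SDF| < band)."""
    h = 1.0 / res                                  # voxel width
    c = (np.arange(res) + 0.5) * h - 0.5           # voxel-centre coordinates
    x, y = np.meshgrid(c, c, indexing="ij")
    r_xy2 = x**2 + y**2
    count = 0
    for z in c:                                    # one z-slice at a time keeps memory at res^2
        sdf = np.sqrt(r_xy2 + z**2) - radius
        count += int(np.count_nonzero(np.abs(sdf) < band_voxels * h))
    return count

for res in (64, 128):
    dense = res**3
    active = count_near_surface(res)
    print(f"res={res}: dense={dense:,}  near-surface={active:,}  ({100 * active / dense:.2f}%)")

# Memory for one float32 value per voxel in a dense 1024^3 grid.
dense_gib = 1024**3 * 4 / 2**30
print(f"dense 1024^3 float32 grid: {dense_gib:.1f} GiB per channel")
```

Doubling the resolution multiplies dense voxels by 8 but near-surface voxels by only about 4, and a dense 1024³ grid already needs 4 GiB for a single float32 channel, which is why sparsity is what makes that resolution practical.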

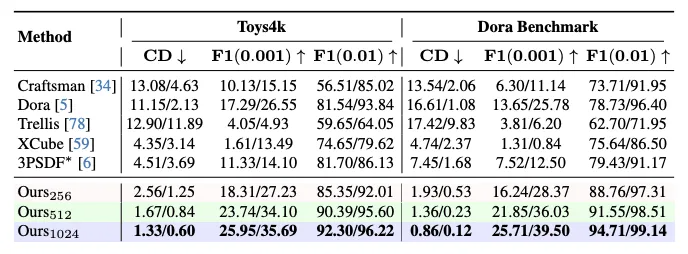

Experimental results indicate that TripoSF sets a new state of the art. On multiple standard benchmarks, TripoSF achieved roughly an 82% reduction in Chamfer Distance and an 88% improvement in F-score compared to previous methods.
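
For readers unfamiliar with the two metrics, here is a brute-force NumPy sketch of a symmetric Chamfer Distance and a thresholded F-score on point clouds. Benchmarks differ in details (squared versus unsquared distances, sum versus mean, the threshold `tau`, how many points are sampled), so this is one common variant and not necessarily the protocol behind the numbers above. Lower Chamfer Distance is better; higher F-score is better.

```python
import numpy as np

def nn_dists(a, b):
    """For each point in a, Euclidean distance to its nearest neighbour in b (brute force)."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.sqrt(d2.min(axis=1))

def chamfer_distance(p, q):
    """Symmetric variant: mean nearest-neighbour distance in both directions."""
    return nn_dists(p, q).mean() + nn_dists(q, p).mean()

def f_score(pred, gt, tau):
    """Harmonic mean of precision (pred near gt) and recall (gt near pred) at threshold tau."""
    precision = (nn_dists(pred, gt) < tau).mean()
    recall = (nn_dists(gt, pred) < tau).mean()
    if precision + recall == 0:
        return 0.0
    return float(2 * precision * recall / (precision + recall))

p = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
q = p + np.array([0.1, 0.0, 0.0])        # same shape, shifted by 0.1 along x
print(chamfer_distance(p, q), f_score(q, p, tau=0.05), f_score(q, p, tau=0.2))
```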

Resources

【TripoSG】

【TripoSF】

Further updates and enhancements for our open-source projects will be posted promptly on VAST AI Research's official GitHub, HuggingFace, and X (formerly Twitter).

In addition to these open-source projects, the tools on the Tripo web platform and our cost-effective API offer seamless access to VAST's latest model services.

For any technical or academic suggestions and collaborations, please feel free to reach out to us at research@vastai3d.com.

A scanner cannot map every crevice on the far side of the moon, but in the wilderness there are always those who toil in the mines. The sound of pickaxes striking the earth echoes on and on until, one day, it merges into a single resounding chorus. Open source is like that pickaxe striking the ground, carving a path on the far side of the moon, where no map exists.
