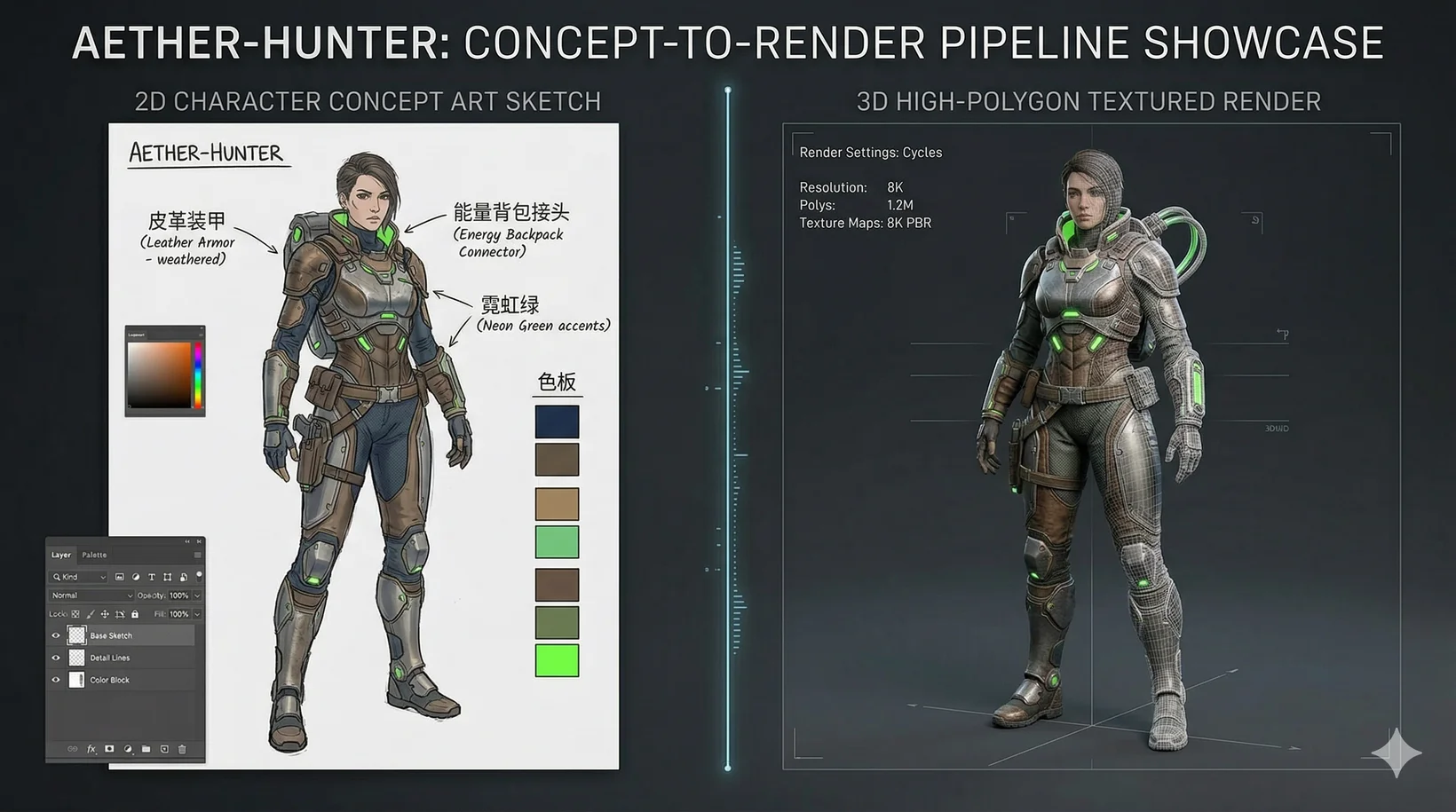

The Complete Blueprint for Converting 2D Character Concept Art to 3D Models Using AI for Game Development

A Professional Guide to AI-Powered Asset Generation Workflows

The interactive entertainment industry in 2026 demands rapid asset generation without sacrificing geometric precision or artistic fidelity. Converting 2D character concept art to 3D models using AI for game development has moved from an experimental technique to a foundational production pipeline. Tripo AI provides an end-to-end ecosystem that automates retopology, UV mapping, and rigging, work that otherwise takes hours by hand, so studios can turn flat illustrations into engine-ready interactive assets quickly.

Key Insights:

- Algorithm 3.1 uses over 200 billion parameters to improve structural accuracy and occlusion prediction.

- Automated workflows handle complex retopology, converting dense meshes with millions of polygons into optimized, animation-ready quad or triangle topology.

- Auto-Rigging technology instantly binds generated characters to anatomical skeletal frameworks for immediate T-pose exports to external software.

- The Tripo API is a product line independent of the Tripo Studio web tool, so studios can scale API operations securely and separately from Studio.

- Intelligent texture segmentation and the Magic Brush tool allow localized PBR material adjustments without disrupting overall mesh continuity or UV maps.

The 2026 Industry Standard for Asset Generation

The modern approach to converting 2D character concept art to 3D models using AI for game development relies on advanced neural architectures, specifically Algorithm 3.1, which uses over 200 billion parameters to achieve precise geometric fidelity and production-ready mesh generation.

In the fast-paced realm of interactive entertainment, developers constantly face the bottleneck of manual asset creation. Traditional pipelines required specialized artists to interpret concept art, sculpt high-poly models, bake normal maps, and meticulously retopologize meshes for real-time engines, a process that often consumed weeks for a single hero asset. As of 2026, this workflow has changed substantially. Integrating 3D Generative AI into professional development pipelines lets studios ingest raw concept sketches and output fully textured, geometrically clean assets in a fraction of the time, dramatically lowering the cost of artistic iteration.

The backbone of this transformation is Algorithm 3.1. With over 200 billion parameters, the system models spatial relationships, volumetric occlusion, and complex anatomical proportions directly from flat imagery. This structural comprehension helps characters maintain consistent silhouettes from every angle, bridging the gap between two-dimensional artistic intent and three-dimensional technical execution. It also allows the AI to predict hidden geometry, so that elements obscured in a 2D drawing are logically constructed in digital space. Consequently, studios can bypass the initial blocking and high-poly sculpting phases and move straight to engine implementation and gameplay testing.

Core Technologies Powering the Transformation Process

Smart mesh generation, intelligent part segmentation, and automated retopology ensure that generated characters are immediately usable in professional game engines while staying within performance constraints.

When developers input visual data, the system performs a multi-layered semantic analysis to construct the base mesh. Intelligent part segmentation automatically divides the generated character into logical components (such as armor plating, underlying clothing fabrics, organic limbs, and handheld accessories), allowing for isolated editing and specific material assignment. This semantic breakdown is crucial for modular character systems found in modern RPGs, where players might dynamically swap out gear or customize physical appearances without causing mesh clipping.

Following the initial high-fidelity generation, the Smart Low Poly feature executes an automated retopology pass. This process reduces polygon counts from potentially millions of faces down to optimized targets while preserving crucial edge flow around deformation zones, such as elbows, knees, and facial structures. Proper edge loops are vital for smooth skeletal deformation during real-time animation. Users can select between quad-based or triangle-based topology depending on their specific rendering pipeline requirements. Furthermore, the generation algorithms prevent common manual artifacts such as non-manifold geometry, duplicate vertices, or inverted normals. The resulting models have clean topology that rivals handcrafted assets, which helps studios respect strict memory budgets and draw call limits. Supported export formats include USD, FBX, OBJ, STL, GLB, and 3MF, which eases integration into any 3D Format Conversion pipeline.
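Two of the artifacts named above, non-manifold geometry and duplicate vertices, can be checked independently on any exported mesh. The following is a minimal sketch in plain Python, assuming the mesh has already been loaded as a list of vertex positions and a list of faces given as vertex-index tuples. The function name `mesh_report` and the tolerance value are illustrative assumptions, not part of any Tripo tool.

```python
from collections import Counter


def mesh_report(vertices, faces, tol=1e-6):
    """Count common topology problems in an indexed polygon mesh.

    vertices: list of (x, y, z) tuples.
    faces: list of tuples of vertex indices (triangles or quads).
    tol: grid size used to treat nearly identical positions as duplicates
         (an assumed value; tune it to the asset's scale).
    """
    # Duplicate vertices: positions that land in the same cell of a tol-sized grid.
    cells = Counter(tuple(round(c / tol) for c in v) for v in vertices)
    duplicate_vertices = sum(n - 1 for n in cells.values())

    # Count how many faces use each undirected edge.
    edge_use = Counter()
    for face in faces:
        for i in range(len(face)):
            a, b = face[i], face[(i + 1) % len(face)]
            edge_use[(min(a, b), max(a, b))] += 1

    return {
        "duplicate_vertices": duplicate_vertices,
        # An edge used by a single face lies on an open boundary (a hole).
        "boundary_edges": sum(1 for n in edge_use.values() if n == 1),
        # An edge shared by more than two faces is non-manifold.
        "non_manifold_edges": sum(1 for n in edge_use.values() if n > 2),
    }


# A closed tetrahedron: every edge is shared by exactly two faces.
tetra_vertices = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
tetra_faces = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (0, 2, 3)]
print(mesh_report(tetra_vertices, tetra_faces))
```

The grid-based duplicate test is deliberately rough: two points that straddle a cell boundary can be missed, so a production check would more likely use a spatial index.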

Step-by-Step Workflow for Character Transformation

Successfully converting 2D character concept art to 3D models using AI for game development requires a systematic approach involving image preparation, AI generation, automated refinement, and targeted post-processing within the AI 3D Editor environment.

The process begins with preparing clear, high-resolution reference illustrations. Clean line art with strong silhouettes, neutral lighting, and minimal background clutter yields the most accurate geometric interpretation. Ideal concept art presents the character in a standard A-pose or T-pose to facilitate precise anatomical generation. Once uploaded into the interface, the platform's Image to 3D Model engine initiates the conversion. Within seconds, the system processes the visual data using Algorithm 3.1 and presents a fully materialized, textured asset in the interactive viewport. At this stage, developers can utilize advanced segmenting tools to refine specific regions or employ auto-fill capabilities to seamlessly patch any occluded areas from the original 2D concept.
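The image-preparation step can be partly automated before upload. The sketch below reads the width and height from a PNG header using only the standard library and compares the shorter side against a minimum. The 1024-pixel threshold is an assumption chosen for illustration; the text above asks only for high-resolution input and does not state a number.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_size(data: bytes) -> tuple[int, int]:
    """Return (width, height) read from the IHDR chunk of PNG file bytes."""
    # Layout: 8-byte signature, 4-byte chunk length, 4-byte chunk type "IHDR",
    # then big-endian 32-bit width and height.
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG file")
    width, height = struct.unpack(">II", data[16:24])
    return width, height


def meets_min_resolution(data: bytes, min_side: int = 1024) -> bool:
    """Check that the shorter image side is at least min_side pixels.

    min_side is a hypothetical threshold, not a documented requirement.
    """
    width, height = png_size(data)
    return min(width, height) >= min_side


# Usage with a real file (the path is a placeholder):
# with open("concept_front.png", "rb") as f:
#     print(meets_min_resolution(f.read(24)))
```

Only the first 24 bytes are needed, so the check stays cheap even for large concept sheets.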

For highly complex character designs, generating multiple views can significantly enhance the system's spatial understanding. The platform accommodates multi-view image inputs, ensuring that side, top, and back profiles align accurately with the primary front concept, eliminating any guesswork regarding unseen details. Following the structural generation, developers apply necessary polygon constraints. Applying classic face limit constraints keeps the assets optimized for real-time rendering environments, preventing hardware strain during active gameplay. The entire pipeline drastically reduces the technical complexity traditionally associated with digital sculpting, shifting the studio's focus toward high-level creative direction, engine integration, and iterative gameplay refinement.
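When choosing a face limit, it helps to remember that real-time renderers draw triangles, so a quad-based mesh costs roughly twice its face count at render time. The following sketch converts a mixed polygon list to a triangle count and compares it against a budget. The function names and the budget value are illustrative assumptions, not settings taken from the platform.

```python
def triangle_count(faces):
    """Number of triangles after fan-triangulating each polygon.

    A polygon with n vertices splits into n - 2 triangles,
    so a triangle counts as 1 and a quad as 2.
    """
    return sum(len(face) - 2 for face in faces)


def fits_triangle_budget(faces, budget):
    """True if the triangulated mesh stays within the given triangle budget."""
    return triangle_count(faces) <= budget


# Two quads and one triangle render as five triangles.
sample_faces = [(0, 1, 2, 3), (3, 2, 4, 5), (5, 4, 6)]
print(triangle_count(sample_faces))           # 5
print(fits_triangle_budget(sample_faces, 4))  # False
```

In practice this means a 10,000-face quad target should be budgeted as about 20,000 triangles in the engine.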

Intelligent Texturing and Material Assignment

Advanced PBR generation and targeted editing tools like the Magic Brush apply highly detailed, physically accurate materials to generated meshes, ensuring realistic light interaction within game environments.

Visual fidelity extends far beyond geometric precision; material properties are equally critical for modern physically based rendering (PBR) engines. The platform automatically generates comprehensive PBR maps alongside the mesh, including diffuse color, roughness, metallic, and normal maps, with support for up to 4K resolution. These textures ensure that distinct surfaces (such as brushed aluminum armor, worn leather belts, or glossy plastic visors) respond authentically to dynamic lighting setups, cast accurate shadows, and interact seamlessly with global illumination systems within the virtual world.
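A 4K map set has a real memory cost, which is worth estimating before committing to that resolution for every character. The sketch below computes the uncompressed size of the four maps listed above, assuming 4K means 4096 × 4096 texels, 8 bits per channel, and the channel counts shown in `PBR_CHANNELS`. These counts are assumptions for illustration; actual engines usually pack channels and apply GPU block compression, which lowers the figures considerably.

```python
def texture_bytes(side, channels, bytes_per_channel=1, mipmaps=True):
    """Uncompressed size in bytes of a square texture.

    With mipmaps=True the full chain down to 1x1 is included,
    which adds about one third on top of the base level.
    """
    texels = 0
    level = side
    while level >= 1:
        texels += level * level
        if not mipmaps:
            break
        level //= 2
    return texels * channels * bytes_per_channel


# Assumed channel counts per map (illustrative, not a documented layout).
PBR_CHANNELS = {"diffuse": 4, "normal": 3, "roughness": 1, "metallic": 1}

total = sum(texture_bytes(4096, c, mipmaps=False) for c in PBR_CHANNELS.values())
print(total / 2**20)  # 144.0 MiB for the base levels alone
```

Dropping to 2K divides each figure by four, which is often the first lever to pull for background characters.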

When specific stylistic adjustments or localized repairs are necessary, the Magic Brush tool provides advanced control over targeted texturing. Instead of exporting the model to external, cumbersome 3D painting software, developers can isolate a specific UV island or model segment and apply prompt-driven AI Texture modifications directly in the browser interface. By adjusting the creativity strength slider, users dictate how strictly the AI adheres to the existing model versus introducing entirely new visual variations. This partial texture repainting eliminates visible texture seams where automated UV mapping creates breaks, harmonizes inconsistent ambient occlusion, and recovers microscopic details lost during the initial translation.

Rigging, Animation, and DCC Bridge Integration

To finalize the production pipeline, automated skeletal Rigging capabilities instantly prepare characters for animation, paired with direct DCC Bridge plugins for seamless export to standard game engines.

A static mesh serves limited purpose in interactive media; game characters need to move. This need is addressed through a proprietary UniRig system, which automatically constructs a complex skeletal framework and assigns appropriate skin weights based on the character's unique anatomical structure. The feature supports various character types, ranging from standard humanoid bipeds to complex quadrupedal creatures. Characters can be exported directly in a universally recognized T-pose, fully equipped with skeleton binding. This eliminates the tedious manual placement of joints, complex inverse kinematics (IK) setups, and rigorous weight painting tasks that historically bottlenecked animation pipelines.
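Skin weights describe how strongly each joint pulls on a vertex, and real-time pipelines commonly cap the number of joints per vertex and require the remaining weights to sum to one. The sketch below shows that generic cleanup step for a single vertex. It is not a description of how UniRig works; the function name and the cap of four influences are assumptions used for illustration.

```python
def limit_influences(weights, max_influences=4):
    """Keep the strongest joint weights for one vertex and renormalize them.

    weights: dict mapping joint name -> non-negative weight.
    max_influences: assumed per-vertex cap; check the target engine's limit.
    """
    strongest = sorted(weights.items(), key=lambda kv: kv[1], reverse=True)
    strongest = strongest[:max_influences]
    total = sum(w for _, w in strongest)
    if total <= 0:
        raise ValueError("vertex has no positive skin weights")
    # Rescale so the kept weights sum to 1.
    return {joint: w / total for joint, w in strongest}


vertex_weights = {"spine": 0.5, "upper_arm": 0.3, "hand": 0.1, "neck": 0.06, "head": 0.04}
print(limit_influences(vertex_weights))
```

Running a pass like this on an imported rig is a cheap way to confirm that exported weights meet the engine's expectations before animation work begins.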

To further streamline the engine integration process, the DCC Bridge ecosystem connects the generation platform directly to major industry-standard software, including Unreal Engine and Unity. This lightweight plugin facilitates real-time model transfer without requiring manual file downloading, unzipping, or tedious directory management. The bridge autonomously handles format conversions, texture resolution optimization, and automatic material instance creation upon import. This direct pipeline ensures that the transition from web-based generation to an active game scene is highly efficient, allowing technical artists to immediately begin applying motion capture data or custom blend spaces.

FAQ

1. How does the Free plan work and what are its limitations?

The Free plan provides 300 credits per month, designed primarily for non-commercial evaluation. It is critical for developers to note that 3D models generated under Tripo's Free plan do not support commercial use.

2. What are the details of the Pro plan for professional production?

For professional production and retail game deployment, the Pro plan ($19.90/month) provides 3,000 credits per month alongside full commercial rights, allowing studios to deploy generated assets directly into monetized commercial titles. For more information on Subscription Plans, please visit the official page.

3. Is the Tripo API included as a feature in the Tripo Studio subscription?

No. Tripo Studio, the creator-facing web-based interactive tool, and the Tripo API are independent product lines. The API service operates with its own distinct billing system, authentication protocols, and backend infrastructure; it is not treated as a mere add-on feature to Studio subscriptions. This strict separation ensures dedicated server infrastructure and predictable cost scaling.